Claude Banned by Anthropic

On April 10, 2026, Peter Steinberger, the founder of OpenClaw, announced on X that it would become increasingly difficult for OpenClaw to remain compatible with Anthropic’s models. He shared a screenshot of an email from Anthropic.

The email indicated that Anthropic had revoked access to Claude for the account due to “suspicious signals” and alleged policy violations. The email stated:

> Hello,
>
> After an internal investigation regarding suspicious signals associated with this account, we have determined that it violated our usage policies.
>
> Therefore, access to Claude has been revoked.
>
> If you wish to appeal this decision, please fill out this form or learn more about the appeal process here.
>
> Regards, Anthropic Security Team

The post quickly gained traction on X, drawing attention to the escalating tensions between Anthropic and OpenClaw.

Responses to the post were overwhelmingly critical:

- Several developers pointed out, “Imagine building a business on Anthropic’s model.” They noted that Anthropic wants developers to build on its platform while retaining the right to ban accounts without explanation.
- Some users bluntly called it a “classic Anthropic move,” stating that “OpenClaw + local LLM has become the only rational path” and that open-source models are eating Anthropic’s lunch.
- Users shared similar experiences of being banned: “I just ran Claude -p with a script and got banned, appealed for 10 days with no response.”
- Others mocked, “Anthropic is playing the 80s-90s Microsoft playbook, building walls without giving back to the ecosystem.”
- Many developers emphasized that this incident highlights the risks of relying on closed AI APIs, urging a shift towards open-source models and local deployment to avoid sudden supply interruptions.

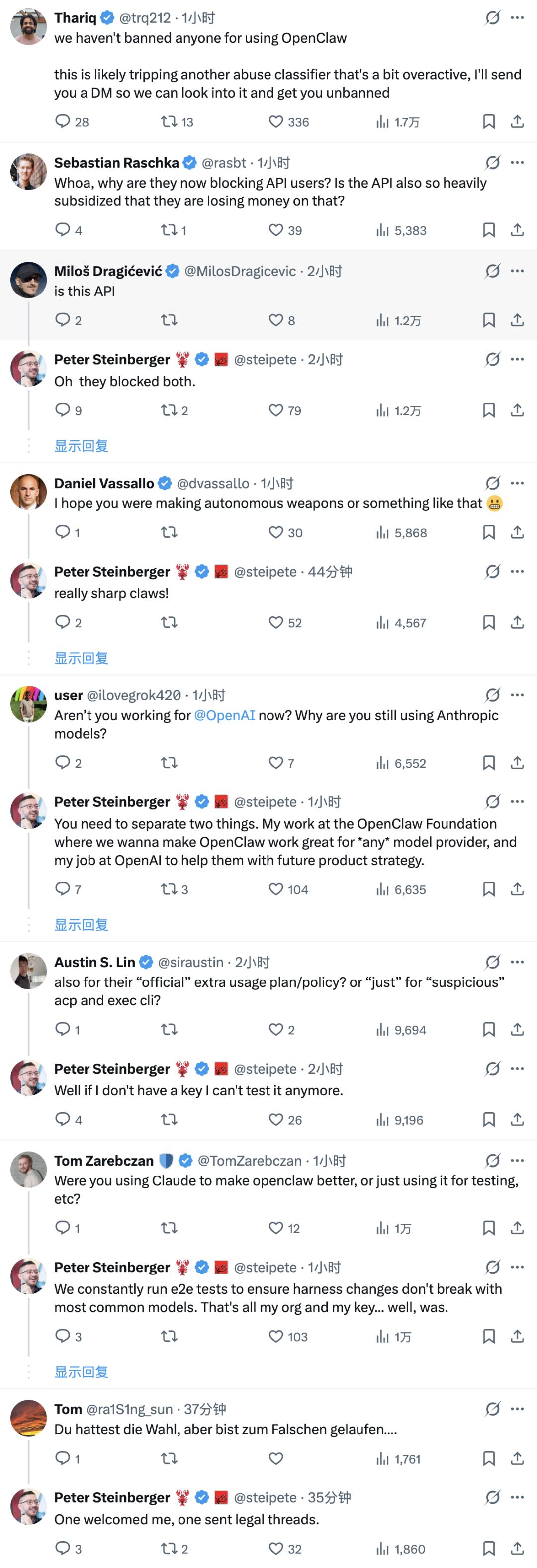

However, the incident does not establish that “Anthropic directly banned OpenClaw.”

Anthropic publicly denied banning accounts because of OpenClaw, yet Peter’s account and key were indeed suspended. This suggests that the core issue between the two parties is not merely whether access is permitted, but whether third-party agent tools can continue to connect in a stable and low-risk manner.

In the same discussion thread, Anthropic employee Thariq stated that the company “did not ban anyone for using OpenClaw” and suggested that the ban was likely a misclassification by an overly aggressive abuse detection system, promising further investigation via private messages.

Judging from the public statements, Anthropic has not classified “using OpenClaw” as a violation in itself, but the automated calling patterns associated with it evidently fall into a category that the platform’s abuse detection treats as highly sensitive.

The controversy comes just days after Anthropic significantly tightened its rules on third-party tools accessing Claude. As of April 4, Claude subscription limits no longer cover calls made through third-party tools, including OpenClaw. Users who wish to keep using such tools need to either purchase discounted additional usage bundles or switch to a Claude API key.

Boris Cherny, head of Claude Code at Anthropic, explained that the usage patterns of third-party tools were not what the subscription product was initially designed to support, and that the platform needs to prioritize the capacity needs of its own products and API users.

Business Insider further cited Anthropic’s statement that using a Claude subscription to drive third-party tools violates the terms of service and that such tools impose “excessive pressure” on the system.

Peter Steinberger argued that many users subscribed to Claude specifically for OpenClaw, and cutting off this pathway would be detrimental to both parties. He mentioned that he and OpenClaw Foundation director Dave Morin had attempted to communicate with Anthropic, but the only outcome was a one-week delay in policy implementation.

On the surface, this is a dispute over account bans. At a deeper level, it reflects an intensifying structural conflict between agent platforms and model vendors.

The core value of products like OpenClaw lies in automating high-frequency, multi-turn, continuous calls to large models, helping users with complex tasks such as managing emails, schedules, and flight check-ins.

From the model platform’s perspective, this usage consumes more computing power, is more likely to trigger risk control and abuse detection, and could potentially bypass official product pathways, impacting existing billing and capacity management systems.

The challenge facing OpenClaw is no longer just whether it can technically connect to Claude, but whether it can keep doing so in a stable, compliant, and cost-effective manner as Anthropic continues to tighten its boundaries.
